A properly designed virtualized SQL Server instance running in a VM with Windows Server (or Linux), using vSphere is crucial to the successful implementation of enterprise applications.

One main difference between designing for performance of critical databases and designing for consolidation, which is the traditional practice when virtualizing, is that when you design for performance you strive to reduce resource contention between VMs as much as possible and even eliminate contention altogether. After a VM is presented and begins taking SQL load, continuous monitoring should be implemented and adjustments made from the original baseline. Right-sizing a VM is a complex process and wise judgment should be made between over allocating resources and underestimating the workload requirements.

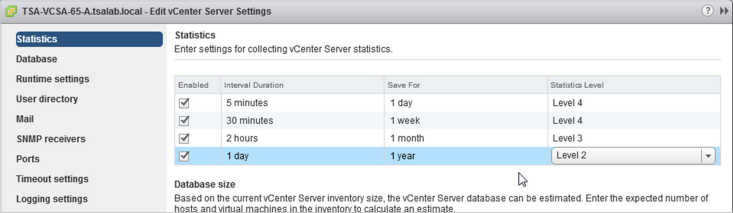

To achieve a significantly longer retention of increased granular metrics, the following statistics levels are recommended. (The default vCenter server configuration is set to a base level of statistics collection).

VMware recommended practices for designing and implementing your vSphere environment with the goal of being optimized for best SQL Server performance.

SQL SERVER ON VMWARE VSPHERE

Best Practices Checklist

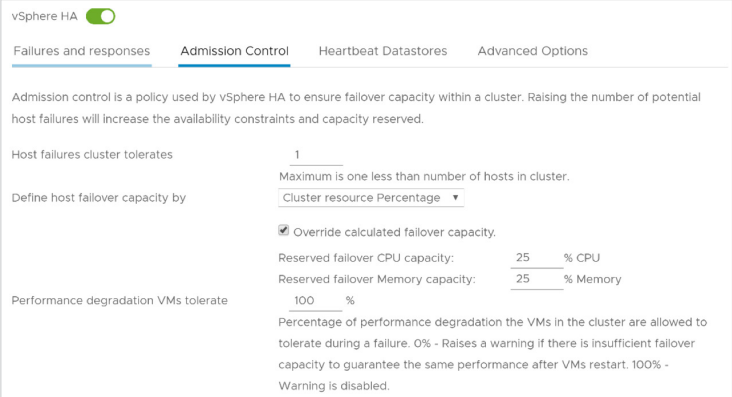

1. vSphere HA admission control can be configured to enforce the reservation of enough resources, so that the ability to power on mission-critical SQL Server workloads is guaranteed. Ensure that enough spare resources on the host cluster exists to withstand a predetermined number of hosts removed from the cluster, both for planned and unplanned scenarios.

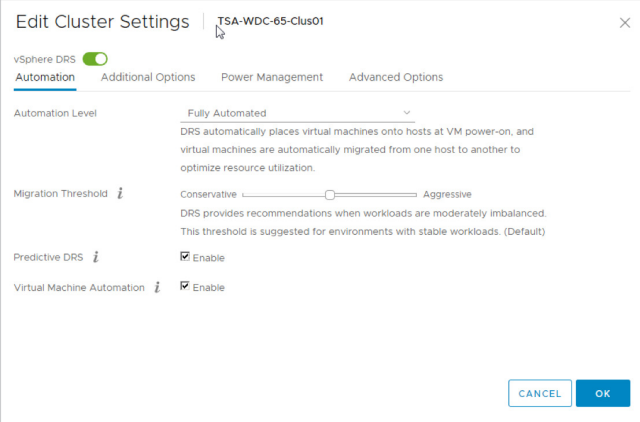

2. VMware recommends to enable DRS functionality for a cluster hosting SQL Server workloads

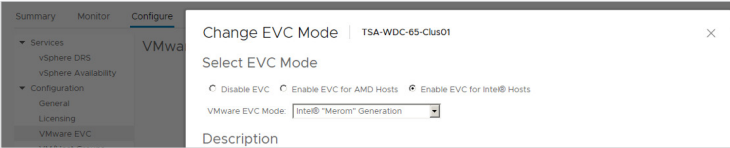

3. Avoid enabling EVC without proper use case – hiding certain CPU features may affect performance of a virtualized SQL Server instance.

4. Resource pools should not be used as folders for virtual machines. Incorrect usage of resource pools, especially nested resource pools, can lead to reduced performance of the virtual machines. Never combine a VM and a Resource pool in the same level of the hierarchy—it will lead to a VM having the same share as the whole Resource pool.

5. Update the BIOS/UEFI firmware on the physical server to the latest version, and make sure all the I/O devices have the latest supported firmware version.

6. The following BIOS/UEFI settings are recommended for high-performance environments (when applicable):

- Enable Turbo Boost.

- Enable Hyper-Threading.

- Verify that all ESXi hosts have NUMA enabled in the BIOS/UEFI. In some systems (for example, HP Servers), NUMA is enabled by disabling node interleaving. Consult your server hardware vendor for the applicable BIOS settings for this feature.

- Enable advanced CPU features, such as VT-x/AMD-V, EPT, and RVI.

- Follow your server manufacturer’s guidance in selecting the appropriate Snoop Mode.

- Disable any devices that are not used (for example, serial ports).

- Set Power Management (or its vendor-specific equivalent label) to “OS controlled” (or its vendor-specific equivalent label). This will enable ESXi hypervisor for controlling power management based on the selected policy.

- Disable all processor C-states (including the C1E halt state). These enhanced power management schemes can introduce memory latency and sub-optimal CPU state changes (Halt-to-Full), resulting in reduced performance for the VM.

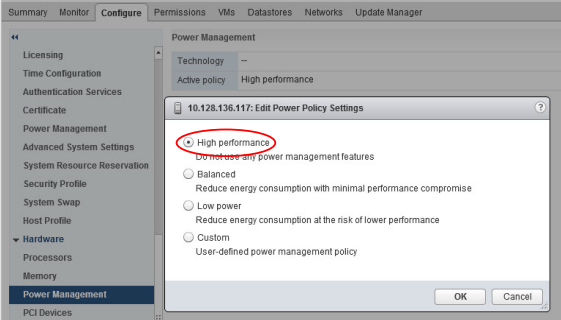

7. Previous versions of ESXi default to the “High Performance” power scheme. vSphere 5.0 and later defaults to the “Balanced” power scheme. Power scheme should be changed to “High Performance”.

Browse to the host in the vSphere Client.

- Click Configure.

- Under Hardware, select Power Management and click the Edit button.

- Select “High Performance” power management policy for the host and click OK. The policy selection is saved in the host configuration and can be used again at boot time. You can change it at any time, and it does not require a server reboot.

8. The total number of vCPUs assigned to all the VMs should be no more than the total number of physical (not logical) cores available on the host machine. Taking a more conservative sizing approach helps rule out CPU resource contention as a possible contributing factor in the event of sub-optimal performance when virtualizing SQL Server implementations. After you have determined that there is excess capacity to be used, you can consider increasing density by adding more workloads into the vSphere cluster and allocating virtual vCPUs beyond the available physical cores.

9. ESXi makes conscious CPU management decisions regarding mapping vCPUs to physical cores, taking Hyper-threading into account. VMware recommends enabling Hyper-threading in the BIOS/UEFI so that ESXi can take advantage of this technology.

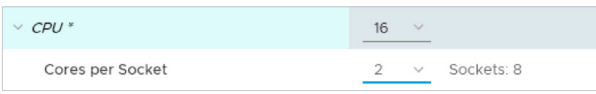

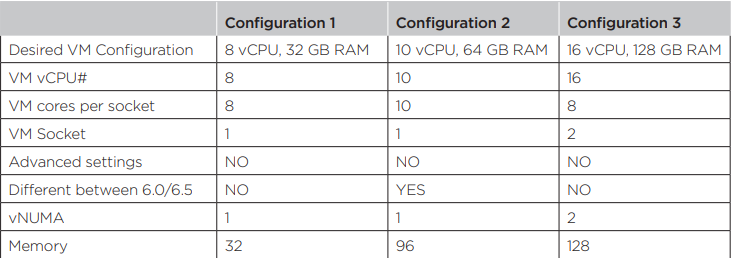

10. Reflect the underlying hardware configuration for configuring Cores per Socket ratio. Care should be taken to get the right vNUMA topology exposed to a VM.

Example:

- A server with a total of 16 CPU cores and 192 GB RAM (8 cores and 96 GB of RAM in each pNUMA node) is used to host a VM with 16 vCPU. “Cores per Socket” is set to two (2). As a result, a vNUMA topology with eight (8) NUMA nodes is exposed to the VM, which is suboptimal.

- Use configurations examples listed to properly assign vCPUs to a VM

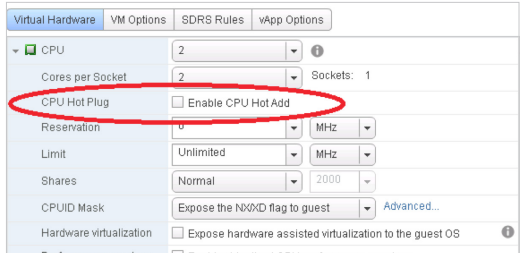

11. VMware recommends not enabling CPU hot plug by default, especially for VMs that require vNUMA. SQL Server Enterprise Edition supports adding a CPU in this way from version 2008 and up; however if a CPU is added in this way, it will affect the vNUMA topology. This might result in degraded performance, because the NUMA architecture does not reflect that of the underlying physical server. Rightsizing the VM’s CPU is always a better choice than relying on a CPU hot plug. (The decision whether to use this feature should be made on a case-by-case basis and not implemented in the VM template used to deploy SQL).

12. VMware recommends not using CPU affinity because it limits the Hypervisor’s ability to efficiently schedule vCPUs on the physical server.

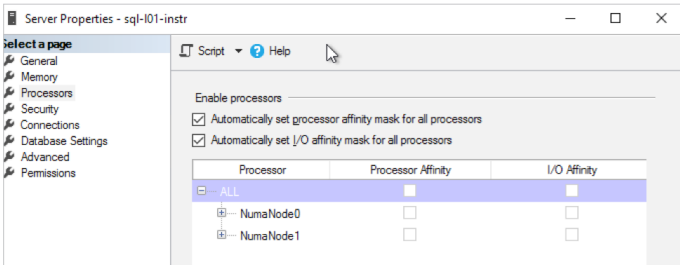

13. SQL Server Enterprise edition is required to utilize NUMA awareness. Avoid the wide NUMA configuration on any other version of SQL Server. If a wide-NUMA configuration is unavoidable, execute extensive performance testing before implementing the configuration. Monitoring should be implemented for important CPU counters after moving to the production. SQL Server Management Studio displays the NUMA topology under processor properties of the server instance.

General Rules (applies to all versions of vSphere starting with 5.0):

- vNUMA is not exposed for any VM having less than nine (9) vCPU assigned (default).

- vNUMA is not exposed to any VM having less vCPU than the size of pNUMA of a host (default).

- vNUMA is not exposed if the “CPU hot add” feature is enabled

14. Avoid overcommitment of memory at the ESXi host level (HostMem >= Sum of (VMs memory + overhead). If a physical server has 256GB of RAM, do not allocate more than that amount to the VMs residing on it taking memory overhead into consideration as well.

15. When collecting performance metrics for making a sizing decision for a VM running SQL Server, USE SQL Server provided metrics (available in the DMV: sys. dm_os_process_memory). Do not use vSphere or Windows Guest OS provided memory metrics (for example, vSphere provided “memory consumed” or, especially, “memory active”).

16. Check the hardware pNUMA memory allocation to identify the maximum amount of memory that can be assigned to a VM without crossing the pNUMA boundaries.

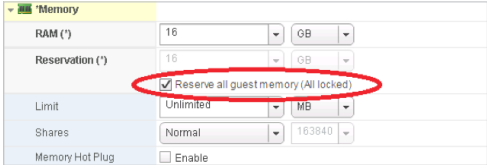

17. Check that the memory reservation is equal to the provisioned memory. Reserving all memory will allow SQL Server to access all of the memory that was provisioned, and disable the creation of the swap file which saves disk space (especially for VMs with a large amount of memory assigned). Swapped VM memory will stay swapped, even if congestion conditions are gone. The goal is to eliminate any chance of paging from happening.

18. Support of PMem was introduced in the vSphere version 6.7 and can be combined with a native PMem support in Windows Server 2016 and SQL Server 2016 SP1 and higher, increasing performance of high-loaded databases. After a vPMem device is exposed to a VM with Windows Server 2016 OS OR Higher, it will be detected as a Storage Class Module and should be formatted as a DAX volume. SQL Server can use that DAX volume to enable “tail-of-log-cache” by placing an additional log file on a SCM volume configured as DAX. Only 20 MB of PMem space is required (SQL Server will use only 20MB to store the log buffer), one PMem module could be efficiently shared between multiple VMs running on the same host.

ALTER DATABASE <dbname> ADD LOG FILE (NAME = <Daxlog>, FILENAME = ‘<PathFile>’, SIZE = 20 MB)

19. Some SQL Server workloads are more sensitive to network latency than others. Virtual Networking Traffic types should be separated to keep like traffic contained to designated networks. vSphere can use separate interfaces for management, vSphere vMotion, and network based storage traffic. Additional interfaces can be used for VM traffic. Within VMs, different interfaces can be used to keep certain traffic separated. Use 802.1q VLAN tagging and virtual switch port groups to logically separate traffic. Use separate physical interfaces and dedicated port groups or virtual switches to physically separate traffic. If using iSCSI, the network adapters should be dedicated to either network communication or iSCSI, but not both.

20. Use the VMXNET3 paravirtualized NIC. VMXNET 3 is the latest generation of paravirtualized NICs designed for performance. It offers several advanced features including multi-queue support, Receive Side Scaling, IPv4/IPv6 offloads, and MSI/ MSI-X interrupt delivery. Follow the configuration guidelines for SQL Server workloads.

21. Utilize multi-NIC vMotion. With multi-NIC vMotion, every vMotion operation utilizes multiple port links, even a single VM vMotion operation. This speeds up vMotion operation and reduces the risk for SDPS on large, memory intensive VMs.

22. Upgrading to the latest version of VMWare Tools will provide the latest enhancements and bug and security fixes for virtual hardware devices like VMXNET3 network adapter or PVSCSI virtual controller. For example, VMware Tools version 10.2.5 enables RSS Scaling to be default for any new installation. It should be double underscored, that the VMware Tools upgrade is essentially a driver upgrade process and it will influence the core OS, hence should be done with care and preliminary testing in a nonproduction environment.

23. VMware recommends upgrading the virtual machine compatibility when new physical hardware is introduced to the environment. Virtual machine compatibility upgrade should be planned and taken with care. If for some reason the VMware Tools are not installed, ensure they are installed to get the best performance from SQL Server.

24. Thin provisioning with SQL Server:

- Configure the vmdks as eagerzeroedthick to avoid overhead I/O for space provisioning. Systems prior to Server 2008 may need the partitions aligned for additional performance. See manufacturer for details on this process.

25. Utilize the VMware Paravirtualized SCSI (PVSCSI) Controller as the virtual SCSI Controller for data and log VMDKs. The PVSCSI Controller is the optimal SCSI controller for an I/O-intensive application on vSphere allowing not only a higher I/O rate but also lowering CPU consumption compared with the LSI Logic SAS. In addition, the PVSCSI adapters provide higher queue depth, increasing I/O bandwidth for the virtualized workload.

26. Use multiple PVSCSI adapters. VMware supports up to four (4) adapters per virtual machine, and as many as necessary up to this limit should be leveraged. Placing operating system, data, and transaction logs onto a separate vSCSI adapter optimizes I/O by distributing load across multiple target devices and allowing for more queues on the operating system level. Consider distributing disks between controllers.